In this post I’ll focus on what Rails offers to help minimise the number of SQL queries.

First of all, be sure to have rack-mini-profiler installed so you can check which SQL queries are executed per page. Also, don’t forget to enable caching in development when needed (`bin/rails dev:cache`).

- Counter caching. Handy for avoiding SQL counts. Keep in mind that the counter cache only gets updated when creating/destroying records, so be careful if you use soft deletion in your models. Use `size` instead of `count` when using counter caching (`count` will always perform a SQL query).

- JSON caching. If you use Jbuilder, it’s easy to set up caching strategies. To avoid rendering partials, here is a little trick: https://coderwall.com/p/zn-gkq/cache-your-partials-not-the-other-way-around
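The counter caching caveat above can be sketched without Rails. This is a plain-Ruby simulation, not ActiveRecord: the `Post` class, its method names and the in-memory "table" are all hypothetical, and it assumes soft-deleted rows are excluded from counts. It only illustrates why a cached counter and a real count can disagree after a soft deletion.

```ruby
# Plain-Ruby simulation (no Rails): a hypothetical Post whose counter column
# is only touched when a favorite is created or destroyed.
class Post
  attr_reader :count_queries

  def initialize
    @favorites = []       # stands in for the favorites table
    @favorites_count = 0  # stands in for the counter cache column
    @count_queries = 0    # number of simulated COUNT(*) queries
  end

  def create_favorite(user_id)
    @favorites << { user_id: user_id, deleted_at: nil }
    @favorites_count += 1
  end

  def destroy_favorite(user_id)
    index = @favorites.index { |f| f[:user_id] == user_id }
    return unless index

    @favorites.delete_at(index)
    @favorites_count -= 1
  end

  # Soft deletion is an UPDATE, so the counter column is left untouched.
  def soft_delete_favorite(user_id)
    favorite = @favorites.find { |f| f[:user_id] == user_id && f[:deleted_at].nil? }
    favorite[:deleted_at] = Time.now if favorite
  end

  # Plays the role of `size` with a counter cache: reads the column only.
  def favorites_size
    @favorites_count
  end

  # Plays the role of `count`: always goes to the (simulated) table.
  def favorites_count_query
    @count_queries += 1
    @favorites.count { |f| f[:deleted_at].nil? }
  end
end

post = Post.new
post.create_favorite(1)
post.create_favorite(2)
post.soft_delete_favorite(2)

cached = post.favorites_size         # counter column, stale after the soft deletion
actual = post.favorites_count_query  # simulated COUNT(*) over non-deleted rows
puts "size: #{cached}, count: #{actual}, COUNT queries: #{post.count_queries}"
```

In a real app the fix would be to adjust the counter yourself whenever a record is soft-deleted or restored.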

- Association caching. Sometimes your association may already be loaded, so instead of using AR methods that could produce more queries, you can rely on Array/Enumerable methods (e.g. `where` vs `select`).

- Think about relationships from the user’s point of view. This happened to us in production. As an example: if your blog has a list of articles and you want to show which articles are liked by the current user, you could do:

  - `post.favorites.exists?(user: current_user)` This produces an N+1 query, one per article. Or:

  - `current_user.post_favorites.any? { |favorite| favorite.post_id == post.id }` This needs only one query to load all of the user’s favorites (`exists?` always performs a SQL query). Depending on the situation, you may not want to load a big table into memory.

  If you’re not sure about your relationships, check https://github.com/preston/railroady for generating UML diagrams of your models.
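To make the difference between the two approaches concrete, here is a minimal plain-Ruby sketch that counts simulated queries. It does not use ActiveRecord: `FavoritesTable`, its methods and the sample data are hypothetical stand-ins, with each method call counting as one SQL query.

```ruby
require "set"

# Hypothetical in-memory "favorites table"; each lookup counts as one query.
class FavoritesTable
  attr_reader :queries

  def initialize(rows)
    @rows = rows  # [{ user_id:, post_id: }, ...]
    @queries = 0
  end

  # Stands in for post.favorites.exists?(user: current_user).
  def exists?(post_id:, user_id:)
    @queries += 1
    @rows.any? { |r| r[:post_id] == post_id && r[:user_id] == user_id }
  end

  # Stands in for loading current_user.post_favorites once.
  def post_ids_for(user_id:)
    @queries += 1
    @rows.select { |r| r[:user_id] == user_id }.map { |r| r[:post_id] }.to_set
  end
end

rows = [
  { user_id: 1, post_id: 10 },
  { user_id: 1, post_id: 12 },
  { user_id: 2, post_id: 11 }
]
post_ids = [10, 11, 12, 13]
current_user_id = 1

# Per-post check: one query for every post on the page.
per_post = FavoritesTable.new(rows)
liked_a = post_ids.select { |id| per_post.exists?(post_id: id, user_id: current_user_id) }

# Per-user load: one query, then in-memory membership checks.
per_user = FavoritesTable.new(rows)
favorite_ids = per_user.post_ids_for(user_id: current_user_id)
liked_b = post_ids.select { |id| favorite_ids.include?(id) }

puts "per-post: #{per_post.queries} queries, per-user: #{per_user.queries} query"
```

Both approaches return the same liked posts. The per-user version trades queries for memory, which is why it may not suit a very large favorites table.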

- Testing caching. Clean code should come with clean tests. If you add caching, tests help your coworkers understand what your code does and help you avoid regressions. Here is an example setup for RSpec:

module CachingHelpers

# INFO: helper for accessing key/value pairs included in the cache

#

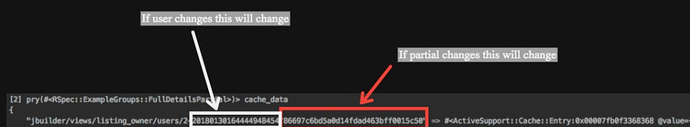

# cache_data #=> {"jbuilder/views/users/1-2018...": <user_partial content>}

def cache_data

Rails.cache.instance_variable_get(:@data)

end

end

RSpec.configure do |config|

config.include CachingHelpers

config.before(:each, :caching) do

allow(::Rails).to receive(:cache).and_return(ActiveSupport::Cache::MemoryStore.new)

Rails.cache.clear

end

config.around(:each, :caching) do |example|

caching = ActionController::Base.perform_caching

ActionController::Base.perform_caching = true

example.run

ActionController::Base.perform_caching = caching

end

end

Example expectation:

      expect(cache_data.keys)
        .to include(%r{jbuilder/views/users/#{user.id}})

- Fragment caching. With the above setup it’s easier to understand what actually gets into the cache (keys & values), and you can also test fragment caching. Example output: